February 11, 2026 at 12:00 PM ET

Webinar Recap: Developing Acceptance Pathways

Integrating AI into Research Development Workflows


Artificial intelligence is no longer a future consideration for research development. It is already here. Faculty are using it, students are using it, and team members are likely experimenting with it quietly. The question facing Research Development Officers (RDOs) today is not whether they should engage with these tools, but how to engage with them responsibly.

In a recent webinar hosted by Atom Grants, Michelle Davis, a Research Development Officer at Texas A&M AgriLife Research, shared a practical, function-focused framework for adopting AI. Here is a breakdown of her approach to AI adoption that does not compromise compliance, privacy, or professional judgment.

The Function-Focused Approach

The conversation around AI often devolves into debates about which chatbot is best. Davis suggests moving upstream from those technical debates. Instead of starting with a tool, start with the workflow.

This approach is tool-agnostic. It focuses on identifying tasks, workflows, and pain points, then asking where technology can genuinely reduce administrative burden. The goal is to build a mental model for decision-making rather than simply check off a list of software to buy.

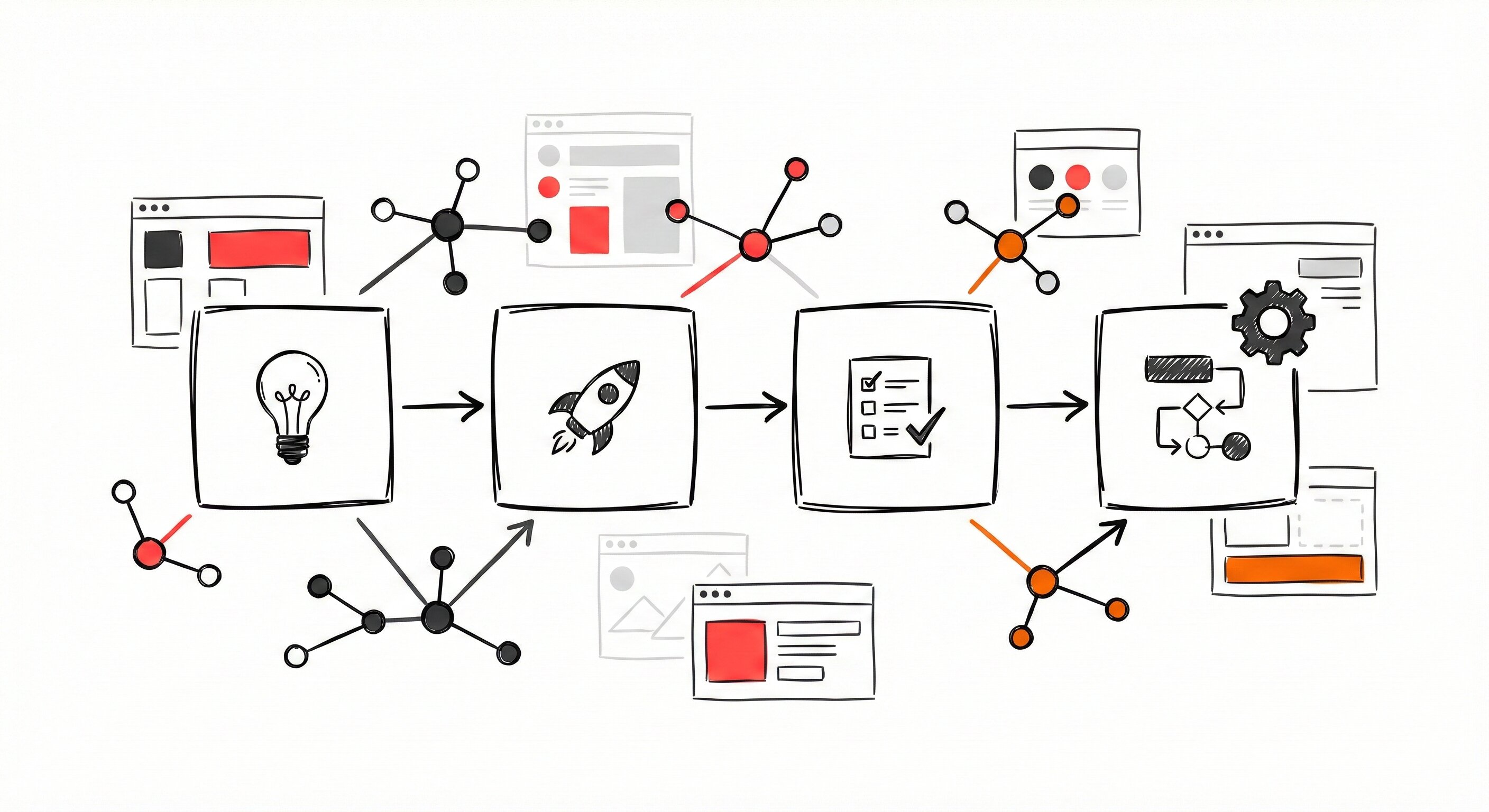

The Four-Stage Acceptance Pathway

To move from hesitation to integration, Davis outlined a four-stage framework designed to follow the technology adoption lifecycle.

1. Awareness

This stage goes beyond knowing AI exists. It involves educating stakeholders, dispelling misconceptions, and building a shared language for discussing these tools within your specific context.

2. Pilot

Once awareness is established, move to small-scale, low-risk trials. Do not roll out a tool across the entire operation immediately. Instead, test a specific tool on a specific workflow with a single team to learn what works and what adjustments are needed.

3. Validation

This is the governance step, and it often takes the most time. Validation is not just a technical test; it is about policy alignment. You must ask: Does this meet our standards? Does it preserve professional accountability? Is it appropriate for our context?

4. Normalization

Only after validation does a tool become part of the routine. In this stage, the technology is no longer an experiment but an integrated element of operations, supported by clear documentation and guardrails.

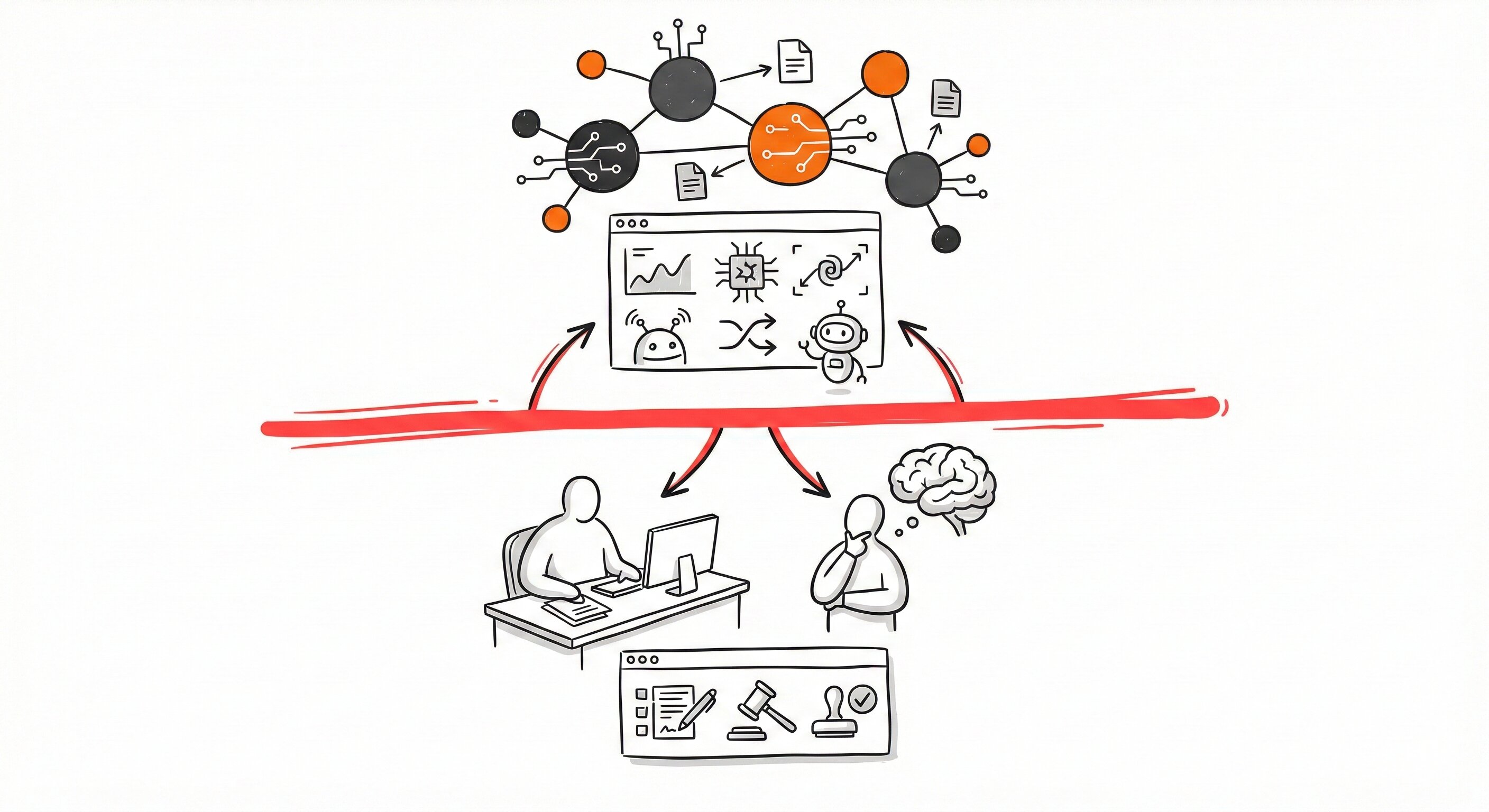

Defining Red Lines and Human Accountability

A critical component of this framework is defining "red lines": the boundaries where AI assistance ends and human judgment must take over.

For example, in a funding opportunity search, AI can handle the scanning and synthesis, but the human RDO must retain the authority to determine relevance, eligibility, and sponsor alignment. These boundaries protect the institution and ensure that AI is used defensibly and appropriately.

Real-World Use Case: Funding Searches

Davis shared a successful use case involving the time-intensive process of scanning funding announcements.

- The Problem: Traditional manual keyword searches and iterative filtering consumed significant time.

- The AI Solution: Using AI to run semantic searches and rapidly synthesize opportunities.

- The Outcome: The pilot resulted in increased coverage of funding sources without adding headcount, faster turnaround times for faculty inquiries, and improved opportunity matching based on concepts rather than just keywords.

Most importantly, this shift freed RDOs to focus on high-value work such as proposal development, faculty consultation, and strategic planning: the work that actually moves the needle for the institution.

Overcoming Resistance and Privacy Concerns

Resistance to change is natural. Davis noted that resistance is not necessarily tied to demographics such as age, but rather to "perceived usefulness." If you can demonstrate that a tool effectively solves a specific pain point, users are more likely to accept it.

Regarding privacy, Davis emphasized the importance of using enterprise-level internal systems rather than public tools. Texas A&M AgriLife uses an internal system with modules for tools like GPT-4 and Claude to keep data secure, and it strongly discourages the use of external public systems for sensitive work.

Access Michelle's Presentation

To dive deeper into the framework and visual models discussed in the webinar, you can access Michelle Davis's full slide deck directly below.

Download Michelle's Presentation: Developing Acceptance Pathways

Moving Forward

The future of AI in research development relies on intentional trust building. Handing someone a tool and saying "figure it out" is rarely successful. Success comes from creating internal playbooks, providing training, and implementing small, scalable pilots that respect the professional judgment of the team.

Book a demo to see how Atom Grants can support your research development workflows.

Michelle Davis

Research Development Officer, Texas A&M AgriLife Research

Michelle is a Research Development Officer at Texas A&M AgriLife Research, where she pioneers the responsible integration of AI into research administration. Moving beyond the hype of chatbots, she advocates for a "function-focused" approach that prioritizes workflows and administrative relief over technical debates. Her "Acceptance Pathways" framework provides institutions with a practical roadmap to navigate compliance, privacy, and human judgment in the age of AI. Michelle is dedicated to helping RDOs transition from hesitation to normalization, ensuring technology acts as a force multiplier for high-value research support.

Tomer du Sautoy

Co-Founder & CEO, Atom Grants

Helping universities modernize research development with AI to reduce admin burden, increase faculty engagement, and improve proposal success.

LinkedIn: https://www.linkedin.com/in/tomer-du-sautoy/

Contact: tomer@atomgrants.com

Location: New York