April 1, 2026

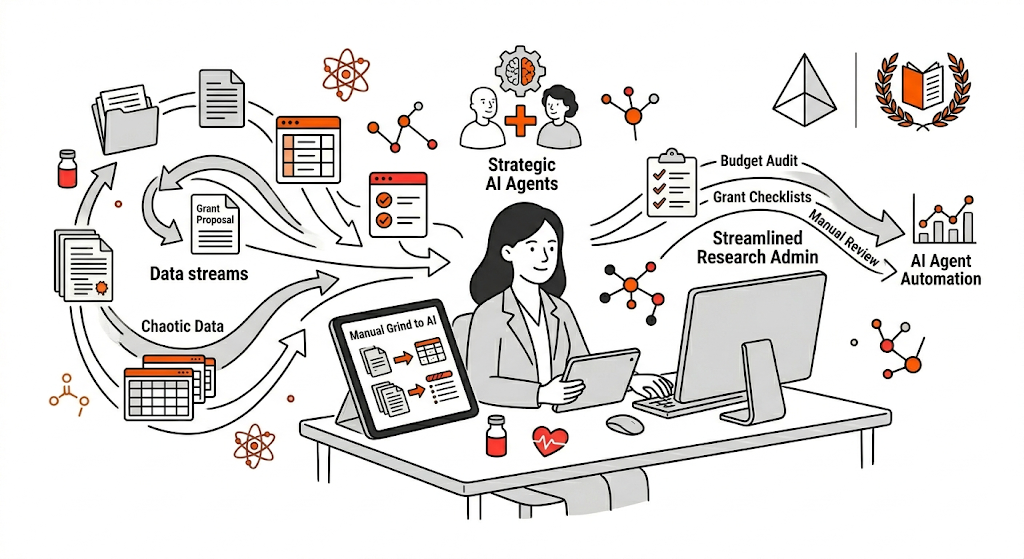

Don't Replace Expertise, Amplify It

A New Approach to AI in Research Admin

For 25 years, Erika Cottingham has lived in the trenches of research administration. After mastering the grants landscape at institutions across the eastern United States, she's now advocating for a radical shift: moving from the manual grind to strategic leverage with AI. At Auburn University, she's mobilizing her team to build AI agents that automate checklists and audit budgets. At the same time, she and a colleague have surveyed peers across the field on how research administrators actually want to use AI responsibly.

Meet Erika Cottingham

Erika Cottingham is the Director of Research Program Development, Grant and Contract Administration at Auburn University. Her journey is a masterclass in resilience: she started as a program coordinator who taught herself grant writing, then spent over two decades moving through leadership roles at Cincinnati Children's Hospital, UNC Charlotte, Emory University, Morehouse School of Medicine, and Columbus State University before taking on her current role overseeing research administration across Auburn's divisions.

What sets her apart is not just her breadth of experience but her conviction that the field is drowning in administrative work. Instead of accepting that as normal, she's decided to do something about it.

The Proposal Development Challenge

Most research offices have gotten good at finding funding. The tools are there. But proposal development, the phase where strategy meets storytelling, remains a bottleneck that no one has figured out how to fix.

Why? Because proposal development is where everything collides: sponsor language, budget architecture, compliance, narrative framing, and institutional priorities. For decades, institutions have expected people to manage this complexity alone using email threads, spreadsheets, and shared documents.

Erika calls this "cognitive friction."

It's the mental exhaustion that comes from spending hours editing forms, cross-referencing compliance details, and reformatting documents. Those tasks drain energy from the work that actually matters: strategy, storytelling, and persuasion.

What Research Admins Really Want From AI

To understand what her community actually needed, Erika and her colleague Eric Martinez at the University of Texas Rio Grande Valley sent out a survey. They expected maybe 10 responses.

They got 150.

The responses came from across the entire research lifecycle: pre-award, proposal development, post-award, and everything in between. And the data was clear: 85% of respondents did not want AI to replace them. They wanted it to help them think faster and reduce their mental load.

But the survey also revealed fears. Hallucinations. Security concerns. Loss of voice. Ethical gray areas. Institutional policy gaps.

Erika understood both sides. The technology was moving fast, and models were improving. But if you fear AI replacing you, she reasoned, the logical move is to grab hold of it and help shape it, not assume it will take your spot.

Creating AI Agents That Don't Replace Expertise

At Auburn, Erika's team has begun building practical AI agents. Two examples stand out:

The Funding Opportunity Checklist Agent

When a faculty member submits a funding opportunity, an AI agent analyzes it and builds a preliminary checklist of requirements. Cost share mentioned? Check. Required or optional? The agent flags it. But here's the key: a human then interprets that language, determines the timeline, and fleshes out what the process actually looks like.

The agent doesn't replace the pre-award specialist. It frees up their time to focus on higher-leverage work.
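To make the checklist step concrete, here is a minimal Python sketch under loudly stated assumptions. It swaps the AI model for simple keyword rules, so `build_checklist`, `classify`, and `REQUIREMENT_PATTERNS` are hypothetical names and this is not Auburn's implementation. What it keeps is the division of labor described above: the code flags a requirement and whether the solicitation's wording makes it required or optional, and every item stays marked for human review.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns standing in for an AI model's reading of a solicitation.
REQUIREMENT_PATTERNS = {
    "Cost share": r"cost[- ]shar(?:e|ing)",
    "Letters of support": r"letters? of (?:support|collaboration)",
    "Data management plan": r"data management plan",
}


@dataclass
class ChecklistItem:
    requirement: str
    status: str  # "required", "optional", or "unclear"
    evidence: str  # the sentence that triggered the flag
    needs_review: bool = True  # a human always interprets the language


def classify(sentence: str) -> str:
    """Guess whether a sentence makes a requirement mandatory or optional."""
    s = sentence.lower()
    # Check optional phrasing first, because "not required" contains "required".
    if re.search(r"\b(?:not required|optional|encouraged)\b", s):
        return "optional"
    if re.search(r"\b(?:required|must|mandatory)\b", s):
        return "required"
    return "unclear"


def build_checklist(solicitation: str) -> list[ChecklistItem]:
    """Build a preliminary checklist from the first mention of each requirement."""
    sentences = re.split(r"(?<=[.!?])\s+", solicitation)
    items = []
    for name, pattern in REQUIREMENT_PATTERNS.items():
        for sentence in sentences:
            if re.search(pattern, sentence, re.IGNORECASE):
                items.append(ChecklistItem(name, classify(sentence), sentence.strip()))
                break
    return items


if __name__ == "__main__":
    text = (
        "Applicants must provide a data management plan. "
        "Voluntary cost sharing is optional and will not be considered in review."
    )
    for item in build_checklist(text):
        print(f"[{item.status}] {item.requirement}: {item.evidence}")
```

The keyword rules are deliberately crude; the point is that the output is a starting list a specialist interprets, not a final compliance determination.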

The Budget Narrative Alignment Agent

Late-stage budget changes often break the narrative. An AI agent compares the budget to its corresponding narrative and surfaces misalignments that would otherwise slip through. Again: the agent catches the mechanical work. The human makes the judgment call.
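A similarly hedged sketch of the alignment check follows. It assumes, hypothetically, that the budget is a mapping of category names to whole-dollar amounts and that the narrative names each category and states dollar figures. The helpers `parse_dollars` and `find_misalignments` are illustrative names, not a real product's API. The code flags categories the narrative never mentions and categories whose stated dollar amount doesn't match the budget; a production agent would likely use a language model to handle paraphrase.

```python
import re


def parse_dollars(text: str) -> list[int]:
    """Extract whole-dollar amounts such as "$12,500" from text."""
    return [int(m.replace(",", "")) for m in re.findall(r"\$(\d[\d,]*)", text)]


def find_misalignments(budget: dict[str, int], narrative: str) -> list[str]:
    """Compare a category -> amount budget against the narrative text."""
    sentences = re.split(r"(?<=[.!?])\s+", narrative)
    issues = []
    for category, amount in budget.items():
        mentions = [s for s in sentences if category.lower() in s.lower()]
        if not mentions:
            issues.append(f"{category}: in budget but not discussed in narrative")
            continue
        stated = [d for s in mentions for d in parse_dollars(s)]
        # Only flag a mismatch when the narrative states a figure at all.
        if stated and amount not in stated:
            figures = ", ".join(f"${d:,}" for d in stated)
            issues.append(f"{category}: budget shows ${amount:,}, narrative states {figures}")
    return issues


if __name__ == "__main__":
    budget = {"Travel": 8000, "Equipment": 25000, "Participant support": 3000}
    narrative = (
        "Travel funds of $6,000 will support two conference trips. "
        "Equipment costs of $25,000 cover a shared microscope."
    )
    for issue in find_misalignments(budget, narrative):
        print(issue)
```

Each flag is a prompt for a person, not a verdict: a mismatch may be intentional, for example when the narrative describes only part of a category.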

Safety First: Building Guardrails Without Paralysis

Erika is blunt about this: if your university is not concerned about sensitive data going into public models, that's a red flag. But being afraid is not a strategy.

She advocates for two types of guardrails:

Institutional Guardrails:

- Clarify approved tools: Which tools are already approved? Does your institution have enterprise versions with data protection agreements?

- Define off-limits content: unpublished data, proprietary methods, human subjects data, and export-controlled materials.

- Establish use case boundaries: AI can synthesize and summarize. It should not ingest full confidential proposals.

- Normalize redaction: teach teams to abstract before they prompt. Describe the budget structure instead of uploading the real budget.
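The redaction guardrail can be illustrated in a few lines. This hypothetical `describe_structure` helper turns a real budget into a description of its shape (categories and each one's approximate share of the total) so that a prompt can discuss structure without exposing exact figures. It is an assumption-labeled sketch, not an institutional policy tool, and it does nothing about sensitive text in category names, which still needs a human check.

```python
def describe_structure(budget: dict[str, float]) -> str:
    """Summarize a budget's shape (categories and rough shares), not its figures."""
    total = sum(budget.values())
    if total <= 0:
        raise ValueError("budget must have a positive total")
    # Largest categories first; shares are rounded to whole percentages.
    ranked = sorted(budget.items(), key=lambda kv: kv[1], reverse=True)
    shares = ", ".join(f"{cat} ~{round(100 * amt / total)}%" for cat, amt in ranked)
    return f"{len(budget)}-category budget; share of total: {shares}"


if __name__ == "__main__":
    real_budget = {"Personnel": 120000, "Equipment": 30000, "Travel": 10000, "Indirect costs": 40000}
    print(describe_structure(real_budget))
    # 4-category budget; share of total: Personnel ~60%, Indirect costs ~20%, Equipment ~15%, Travel ~5%
```

Exact dollar amounts never appear in the output, but rounded shares can still be revealing for a small award, so the institutional rules above still apply.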

Ethical Guardrails: Distinguish between data privacy and research security. The latter includes protecting unpublished results, strategic framing, and IP from unauthorized access or foreign interference.

At Auburn, all team members go through rigorous training via their Tiger Tech Team Leaders program before they're cleared to innovate with technology.

Redefining What "The Work" Actually Means

Research administrators have built their identity on mastery: knowing the guidelines, catching compliance landmines, grinding through details. When AI enters, it feels like outsourcing competence. But that mindset belongs to an era where effort and value were tightly coupled. They no longer are.

The work is not manually reformatting biosketches for the 15th time. The work is strategic thinking, risk assessment, alignment, anticipating reviewers, coaching investigators, and protecting the institution.

Friction is just inefficiency we've normalized.

Getting Started

Start small. Use AI to summarize a funding opportunity you've already read, then compare. This reveals a crucial truth: your expertise is still required. AI handles the mechanical work. You interpret the nuance.

The moment you realize AI saves 30 minutes without compromising judgment, resistance softens. It stops feeling like cheating. It starts feeling like leverage.

What's Next

Auburn's goal: double research expenditures over five years with 15% year-over-year growth. No budget for new staff. The only option is efficiency. AI is not optional; it's necessary.
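A quick arithmetic check shows the two parts of that goal agree: growing 15% a year for five years compounds to slightly more than double the starting level.

$$
(1 + 0.15)^5 = 1.15^5 \approx 2.01
$$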

Erika is speaking at conferences throughout 2026, showing other institutions a better way.

Watch the Video

Watch the full conversation here: Full Conversation

Using Atom's AI System to Streamline Funding Discovery

While individual workflows are a powerful start, institutional success requires a scalable system. Many offices face the same recurring pain points:

- Manual searching: Spending hours sifting through messy government databases.

- Outdated spreadsheets: Managing opportunity lists that are irrelevant by the time they reach faculty.

- Administrative burnout: Researchers spending more time seeking money than doing science.

If you are struggling with these bottlenecks, take a look at our case studies to see how peer institutions use Atom Grants to move from manual coordination to automated, high-impact research development. To see how our platform can modernize your specific office, book a demo with our team today. To learn more about these specific AI workflows and tools, visit aimeeoke.ai or get in touch with her team.

About Erika

Erika Cottingham, M.Ed., CRA


Director of Research Program Development, Contract & Grant Administration, Auburn University. Erika is a research administrator with over 25 years of experience transforming research offices across leading institutions including Cincinnati Children's Hospital, UNC Charlotte, Emory University, Morehouse School of Medicine, and Columbus State University. She has spent more than two decades mastering the intersection of grants management, compliance, and institutional strategy, work that taught her where AI can genuinely help and where it creates new risks. Unlike administrators paralyzed by fear of AI replacing them, Erika sees the technology as a tool to eliminate cognitive friction and free her teams to focus on high-leverage strategy. At Auburn, she's building practical AI agents that catch mechanical errors in budgets and compliance checklists while keeping humans in charge of judgment calls. She regularly speaks at national conferences on responsible AI implementation in research administration.

LinkedIn: https://www.linkedin.com/in/erika-cottingham/

About the Author

Raphaël Bernier


Head of Growth, Atom Grants

Helping universities modernize research development with AI to reduce admin burden, increase faculty engagement, and improve proposal success.

LinkedIn: https://www.linkedin.com/in/raph-bernier/

Contact: raphael@atomgrants.com

Location: New York